Large foundation models have dominated public attention in artificial intelligence due to their broad capabilities, massive training datasets, and impressive performance across many tasks. However, a parallel shift is underway. Smaller, specialized AI models are increasingly competitive by focusing on efficiency, domain expertise, and practical deployment advantages. Rather than replacing foundation models, these compact systems are reshaping how organizations think about performance, cost, and real-world impact.

What Characterizes Compact, Purpose-Built AI Models

Smaller, specialized models are designed with a narrow or clearly defined purpose. They typically have fewer parameters, are trained on curated datasets, and target specific industries or tasks such as medical imaging, legal document review, supply chain forecasting, or customer support automation.

Key characteristics include:

- Lower computational requirements during training and inference

- Domain-specific training data instead of broad internet-scale data

- Optimized architectures tuned for particular tasks

- Easier customization and faster iteration cycles

These features allow specialized models to compete not by matching the breadth of foundation models, but by outperforming them in focused scenarios.

Efficiency as a Competitive Advantage

Efficiency is where smaller models stand out most. Large foundation models typically demand substantial computational power, dedicated hardware, and considerable energy. Compact models, by comparison, can run on conventional servers, edge devices, and even mobile hardware.

Published evaluations suggest that, on a narrowly defined task, a well-tuned domain-specific model with fewer than one billion parameters can match or exceed a general-purpose model with tens of billions of parameters. In such cases, the smaller model offers:

- Lower inference cost per query

- Lower latency, suitable for real-time applications

- A smaller environmental footprint from reduced energy consumption

At scale, these savings directly affect profitability and long-term sustainability goals.
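To make the efficiency argument concrete, here is a rough sizing sketch for a hypothetical 1-billion-parameter domain model and a hypothetical 30-billion-parameter general model. It estimates two first-order quantities: the memory needed just to hold the weights, and forward-pass compute per generated token. It assumes the common rule of thumb of roughly 2 FLOPs per parameter per token for a dense transformer and ignores activations, KV cache, batching, and hardware utilization, so the numbers are orders of magnitude, not benchmark results.

```python
# Rough, hypothetical sizing: weight memory and forward-pass compute per token.
# Assumption: ~2 FLOPs per parameter per generated token (a rule of thumb for
# dense transformers). Activations, KV cache, and batching are ignored.


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to store the weights, in gigabytes (1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def flops_per_token(num_params: float) -> float:
    """Approximate forward-pass FLOPs per generated token for a dense model."""
    return 2.0 * num_params


SMALL = 1e9   # hypothetical domain-specific model: 1 billion parameters
LARGE = 30e9  # hypothetical general-purpose model: 30 billion parameters

for name, n in [("small", SMALL), ("large", LARGE)]:
    print(
        f"{name}: fp16 weights ~{weight_memory_gb(n, 2):.0f} GB, "
        f"int8 weights ~{weight_memory_gb(n, 1):.0f} GB, "
        f"~{flops_per_token(n):.1e} FLOPs/token"
    )

ratio = flops_per_token(LARGE) / flops_per_token(SMALL)
print(f"compute ratio per token: {ratio:.0f}x")
```

Under these assumptions the 30B model needs about 30 times the compute per token and about 60 GB for fp16 weights versus about 2 GB for the 1B model, which is why the smaller model is a realistic candidate for edge or mobile hardware.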

Specialized Expertise Surpasses General Knowledge

Foundation models excel at general reasoning and language understanding, but they can struggle with nuanced domain-specific requirements. Specialized models gain an edge by learning from carefully labeled, high-quality datasets that reflect real operational conditions.

Examples include:

- Medical imaging models trained exclusively on radiology data outperforming general vision models at detecting early-stage disease

- Financial risk models trained on transaction patterns detecting fraud more accurately than general-purpose classifiers

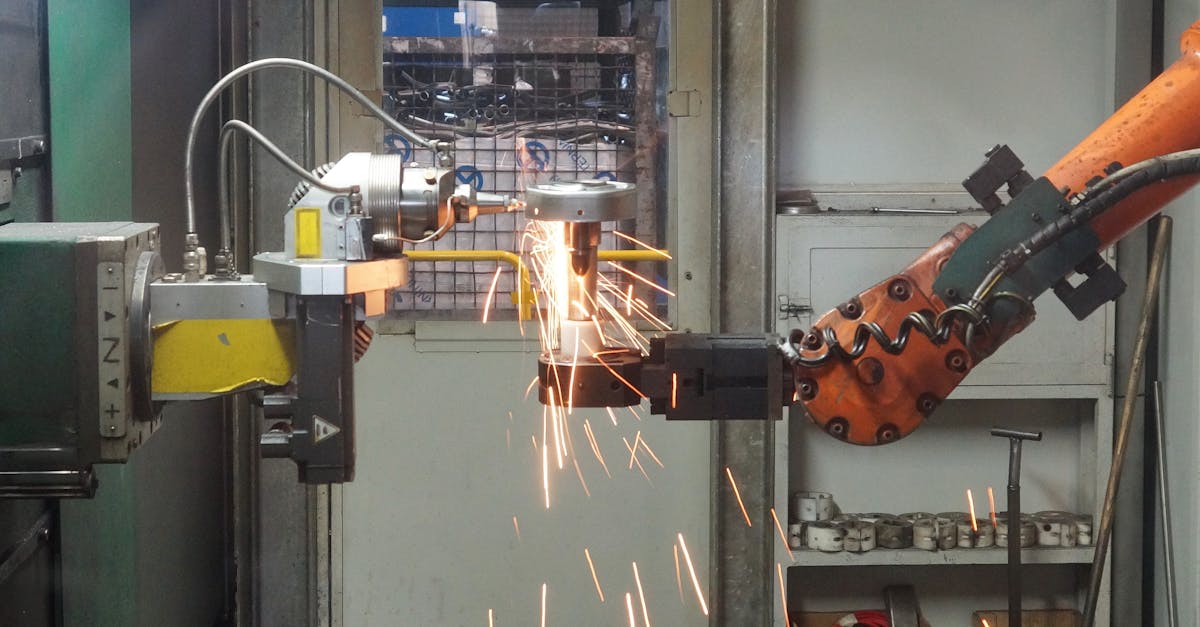

- Manufacturing inspection models catching defects that general-purpose vision models often miss

By narrowing the learning scope, these models develop deeper expertise and more reliable outputs.

Tailored Enterprise Solutions with Enhanced Oversight

Organizations increasingly want direct oversight of their AI systems. Compact models are easier to fine-tune, audit, and manage, which matters most in regulated sectors where transparency and interpretability are essential.

Among the advantages are:

- Simpler architectures that are easier to inspect and interpret

- Faster retraining when data or regulations change

- Closer alignment with internal policies and compliance standards

Enterprises can also deploy these models on their own infrastructure or in private clouds, reducing the data privacy exposure that comes with relying on externally hosted foundation models.

Speed of Deployment and Iteration

Rapid time-to-value matters in competitive markets. Training or customizing a foundation model can take weeks or even months and requires specialized expertise, whereas smaller models can often be trained or fine-tuned in a few days.

This speed enables:

- Rapid experimentation and prototyping

- Continuous improvement based on user feedback

- Faster response to market or regulatory changes

Startups and mid-sized companies benefit most from this agility, which lets them compete with larger organizations that rely on slower, more resource-intensive AI pipelines.

Economic Accessibility and Democratization

The high cost of developing and operating large foundation models concentrates power among a small number of technology giants. Smaller models reduce barriers to entry, making advanced AI accessible to a broader range of businesses, research groups, and public institutions.

Economic effects include:

- Lower upfront investment in infrastructure

- Reduced dependence on external AI service providers

- More localized innovation tailored to regional or sector-specific needs

This shift fosters a more diverse and competitive AI landscape rather than a winner-takes-all market.

Hybrid Strategies: Emphasizing Collaboration Over Complete Substitution

Competition is not necessarily adversarial. Many organizations adopt hybrid strategies in which foundation models provide broad capabilities while smaller, purpose-built models handle critical, specialized tasks.

Common patterns include:

- Pairing a general language-understanding model with a specialized model that makes domain-specific decisions

- Distilling knowledge from large models into compact versions optimized for deployment

- Combining broad reasoning capabilities with domain-specific validation layers
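The second pattern above, transferring what a large model has learned into a compact one, is commonly implemented through knowledge distillation: a small "student" model is trained to match a large "teacher" model's softened output distribution as well as the true labels. The sketch below shows one widely used loss formulation (temperature-scaled KL divergence plus cross-entropy, in the style of Hinton et al.) for a single example in plain Python. The function names, the temperature of 2.0, and the weighting `alpha` are illustrative assumptions rather than a prescribed recipe; real training would compute this over batches in a deep learning framework.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; a higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for discrete distributions; assumes q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    true_label: int,
    temperature: float = 2.0,  # illustrative value, not a recommendation
    alpha: float = 0.5,        # illustrative weight on the soft-target term
) -> float:
    # Soft-target term: pull the student's softened distribution toward the teacher's.
    # Multiplying by temperature**2 keeps its gradient scale comparable across temperatures.
    p_teacher = softmax(teacher_logits, temperature)
    p_student_soft = softmax(student_logits, temperature)
    soft = kl_divergence(p_teacher, p_student_soft) * temperature ** 2

    # Hard-target term: ordinary cross-entropy against the ground-truth label.
    p_student = softmax(student_logits)
    hard = -math.log(p_student[true_label])

    return alpha * soft + (1.0 - alpha) * hard


# Hypothetical 3-class example in which the teacher is confident in class 0.
teacher = [4.0, 1.0, 0.5]
student = [2.0, 1.5, 0.2]
print(f"distillation loss: {distillation_loss(student, teacher, true_label=0):.4f}")
```

The temperature softens the teacher's probabilities so the student also learns how the teacher ranks the incorrect classes, while `alpha` balances that signal against fitting the true labels.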

These strategies combine the strengths of both approaches while mitigating their respective weaknesses.

Limitations and Trade-Offs

Smaller models are not universally superior. Their narrow focus can limit adaptability, and they may require frequent retraining as conditions change. Foundation models remain valuable for tasks requiring broad context, creative generation, or cross-domain reasoning.

The competitive balance depends on the specific use case, data availability, and practical operational constraints, not on model size alone.

The Coming Era of AI Rivalry

The rise of compact, specialized AI models reflects a maturing field in which fit-for-purpose performance matters more than sheer scale. As organizations prioritize efficiency, reliability, and deep domain expertise, these models show that capability is defined not by scale alone but by precision and execution. AI competition will likely evolve through deliberate combinations of broad capability and targeted expertise, producing systems that are not only powerful but also practical and accountable.